Reef DJ

Reef DJ is a proof-of-concept developed within CORPUS to explore a lightweight, interactive approach to AI music generation.

It was heavily inspired by Google’s MusicFX DJ. The point was to see whether a similar kind of live, prompt-driven music interaction could be built without relying on proprietary closed models. Instead of waiting for a full track to render, Reef DJ continuously generates short musical segments in real time and streams them straight into the browser for uninterrupted playback.

Core idea

Reef DJ was built to show that interactive AI music systems do not necessarily need very large models or heavy cloud infrastructure to feel responsive and musically playful. In this case, the underlying generation approach was based on Meta’s MusicGen, which also made the prototype interesting from a research perspective: it suggested a path toward training and adapting this kind of system on our own material rather than treating the model as a fixed black box.

The project focuses on immediacy, controllability, and a clear performance metaphor.

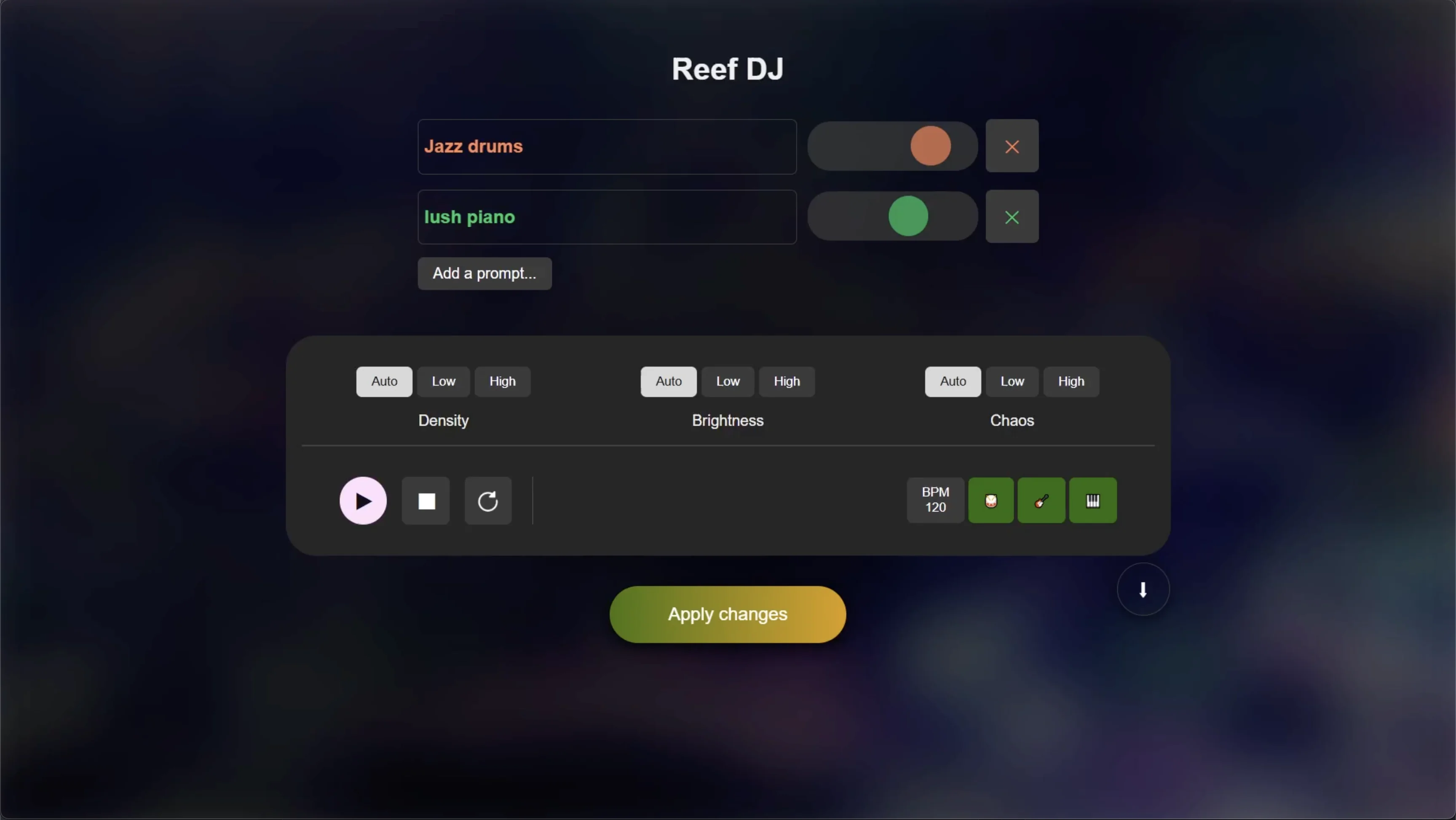

At the interface level, it behaves a bit like a speculative AI DJ deck:

- you build up the generation prompt from multiple weighted text ideas

- you shape the overall musical character with high-level controls

- the system keeps streaming fresh music in short successive chunks

- stems can be manipulated in real time while playback continues

That makes the interaction feel less like submitting a prompt and more like steering a live evolving mix.
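The write-up does not say how Reef DJ joins successive chunks, so the following is only a minimal sketch of one common option: a short linear crossfade at each chunk boundary. The function name `crossfade_append` and the `overlap` parameter are hypothetical and chosen for illustration. Audio is modelled as plain lists of mono float samples.

```python
from typing import List


def crossfade_append(stream: List[float], chunk: List[float], overlap: int) -> List[float]:
    """Append `chunk` to `stream`, linearly crossfading over `overlap` samples.

    Hypothetical helper: the tail of the existing stream fades out while the
    head of the new chunk fades in, so the boundary does not click.
    """
    # Never overlap more samples than either side actually has.
    overlap = max(0, min(overlap, len(stream), len(chunk)))
    if overlap == 0:
        return stream + chunk

    untouched = stream[:-overlap]
    tail = stream[-overlap:]
    blended = []
    for i in range(overlap):
        # t runs from just above 0 to just below 1 across the overlap region.
        t = (i + 0.5) / overlap
        blended.append(tail[i] * (1.0 - t) + chunk[i] * t)
    return untouched + blended + chunk[overlap:]
```

Each new chunk shortens the combined stream by `overlap` samples, so a generator feeding this function would need to produce slightly more audio than it intends to play.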

What it does

The prototype supports several performance-oriented features:

- prompt-weighted mixing, where multiple text prompts can be blended with slider-based emphasis

- high-level creative macros for overall density, brightness, and unpredictability

- tempo guidance through a direct BPM control

- continuous real-time playback in the browser

- stem-based remixing, with separate control over drums, bass, and the remaining musical material

- rolling audio capture, allowing the most recent minute of output to be downloaded
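Two of the features above, prompt-weighted mixing and stem-based remixing, come down to simple weighted sums. The sketch below illustrates that arithmetic only. It assumes each prompt already has an embedding vector and that stems arrive as equal-rate sample lists; the source does not confirm either, and it does not say how Reef DJ actually blends prompts. All function names are hypothetical.

```python
from typing import Dict, List


def normalise_weights(sliders: Dict[str, float]) -> Dict[str, float]:
    """Turn raw slider values into weights that sum to 1.

    Prompts whose slider is at or below zero are dropped entirely.
    """
    active = {prompt: w for prompt, w in sliders.items() if w > 0}
    total = sum(active.values())
    if total == 0:
        return {}
    return {prompt: w / total for prompt, w in active.items()}


def blend_embeddings(embeddings: Dict[str, List[float]],
                     weights: Dict[str, float]) -> List[float]:
    """Weighted average of per-prompt embedding vectors (assumed same length)."""
    dim = len(next(iter(embeddings.values())))
    blended = [0.0] * dim
    for prompt, w in weights.items():
        vec = embeddings[prompt]
        for i in range(dim):
            blended[i] += w * vec[i]
    return blended


def mix_stems(stems: Dict[str, List[float]], gains: Dict[str, float]) -> List[float]:
    """Sum stems sample by sample after applying a per-stem gain (default 1.0)."""
    length = min(len(samples) for samples in stems.values())
    return [
        sum(gains.get(name, 1.0) * samples[i] for name, samples in stems.items())
        for i in range(length)
    ]
```

For example, sliders of `{"dub techno": 3.0, "harp": 1.0}` normalise to weights of 0.75 and 0.25, and a gain of `0.0` on the drums stem mutes it while bass and the remaining material pass through unchanged.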

Because the system works with a rolling musical context rather than isolated one-shot outputs, it can produce longer developments and gradual stylistic shifts. Sometimes those transitions are surprisingly convincing, and sometimes they expose the model’s limits in a very audible way.
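Both the rolling context and the "most recent minute" capture can be pictured as a fixed-length buffer that discards its oldest samples as new ones arrive. The class below is a minimal sketch of that idea, not a description of the actual implementation. `RollingBuffer` and its parameters are hypothetical names.

```python
from collections import deque
from typing import Deque, List


class RollingBuffer:
    """Keep only the most recent `seconds` of mono audio samples."""

    def __init__(self, sample_rate: int, seconds: float) -> None:
        # A deque with maxlen silently drops the oldest items when full.
        self.samples: Deque[float] = deque(maxlen=int(sample_rate * seconds))

    def push(self, chunk: List[float]) -> None:
        """Append a freshly generated chunk to the end of the buffer."""
        self.samples.extend(chunk)

    def snapshot(self) -> List[float]:
        """Return a copy of the buffered audio, oldest sample first."""
        return list(self.samples)
```

A capture buffer for the download feature would be created along the lines of `RollingBuffer(sample_rate=32000, seconds=60)`, where the sample rate is an assumed value. A shorter buffer of the same kind could hold the audio passed back to the model as context.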

Motivation

What interested me about this project was both getting a hands-on feel for the generation model itself and building the interaction design around it. Reef DJ asks what happens when AI music generation is treated as a responsive musical instrument rather than a series of text-to-music prompts.

That opens up a set of questions:

- how direct can prompt-based musical control feel?

- what kinds of abstractions are useful in a performance setting?

- how can generated music remain fluid enough for mixing and intervention?

- what is the smallest practical setup that still feels musically responsive?

Within my broader work, Reef DJ sits somewhere between creative tooling, generative music systems, and performance interface design.

Context

This project emerged as I went further down the rabbit hole of audio-domain music generation and started building a series of practical prototypes around it. It also became a natural bridge between my earlier XR music work and my then-recent role at CORPUS, where it was one of the first prototypes I built for the project.