Mixed Reality Musical Interface: Exploring Ergonomics and Adaptive Hand Pose Recognition for Gestural Control

This project was the first full prototype study of an XR musical instrument in my PhD. It became the foundation for much of the later work in Netz, multimodal hand tracking, and interactive machine learning for musical XR.

The paper, published at NIME 2022, presents an early mixed reality musical interface (MRMI) built for the Microsoft HoloLens 2. The core idea was to investigate how musicians would actually respond to a virtual instrument arranged in physical space: how large it should be, how gestures should work, and whether hand-pose recognition could become musically useful rather than just technically novel.

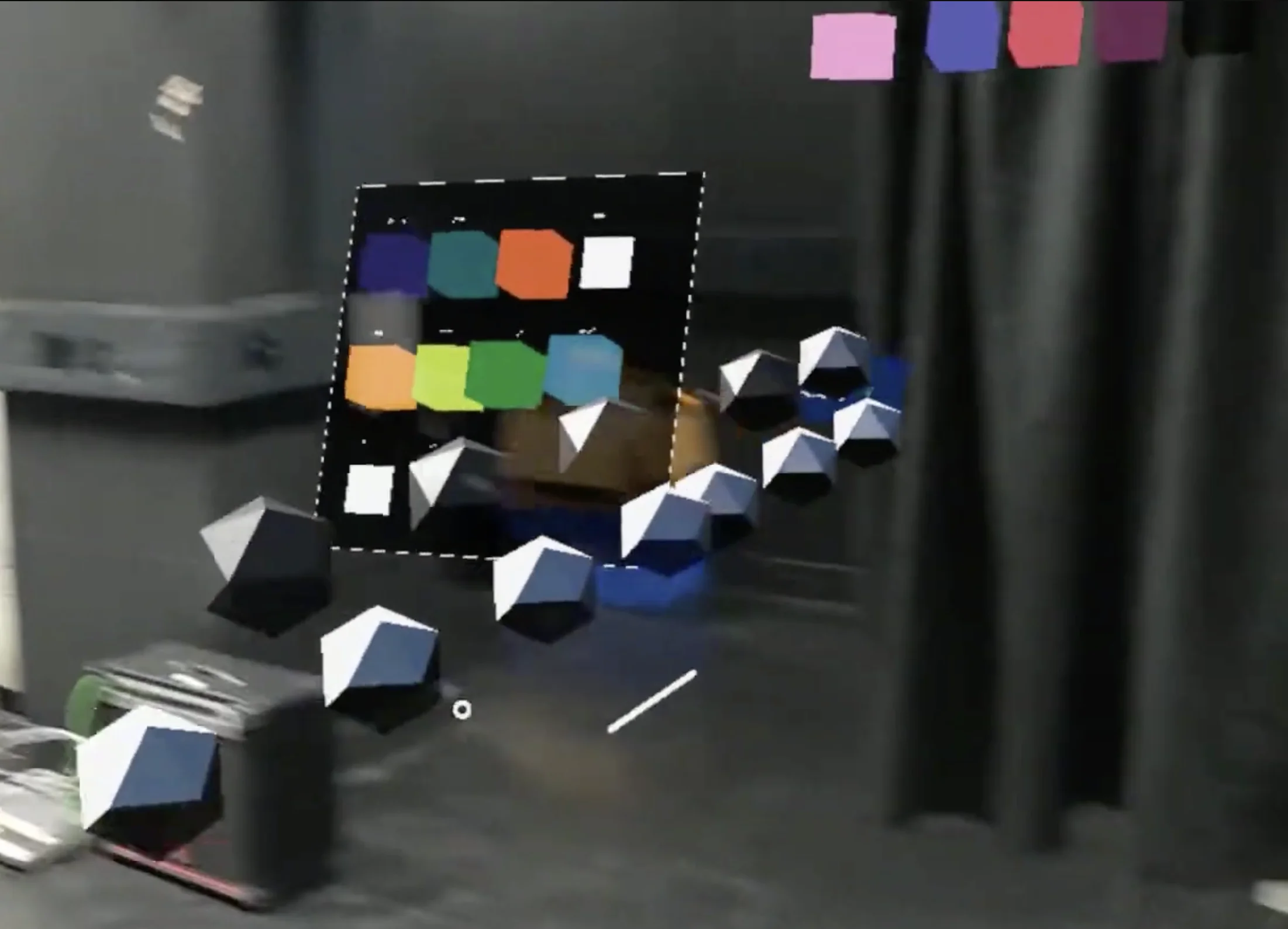

Musical interface and mapping controls captured from the XR device in mixed reality

What I built

The prototype was implemented in Unreal Engine and used a piano-inspired octave of 12 virtual musical objects floating in mixed reality. Each object could be touched, grabbed, and dragged through space.

The playing model split musical interaction across both hands:

- the right hand triggered and manipulated notes and arpeggios

- the left hand controlled chord quality through recognised hand poses

- the whole instrument could be repositioned, rotated, and scaled with two-handed gestures
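The two-hand split can be sketched in a few lines of Python. The right hand picks a root by touching one of the 12 objects, and the left hand's recognised pose supplies the chord quality. This is not the prototype's Unreal Engine code. The base note (MIDI 60 for the first object), the interval table, and the `chord_notes`/`arpeggio` helpers are hypothetical, chosen only to show how the two inputs could combine.

```python
# Hypothetical sketch: combine a touched object (right hand) with a chord
# quality from a left-hand pose. BASE_NOTE and the interval table are assumptions.

BASE_NOTE = 60  # assumed: object 0 is middle C (MIDI note 60)

# Semitone offsets from the root for each chord quality.
CHORD_INTERVALS = {
    "major": (0, 4, 7),
    "minor": (0, 3, 7),
    "diminished": (0, 3, 6),
    "augmented": (0, 4, 8),
    "dominant7": (0, 4, 7, 10),
}


def chord_notes(object_index: int, quality: str) -> list[int]:
    """Return MIDI notes for the chord rooted on the touched object (0-11)."""
    if not 0 <= object_index < 12:
        raise ValueError("object_index must be in 0..11 (one octave)")
    root = BASE_NOTE + object_index
    return [root + interval for interval in CHORD_INTERVALS[quality]]


def arpeggio(notes: list[int], pattern: str = "up") -> list[int]:
    """Order chord tones for an arpeggio: 'up', 'down' or 'updown'."""
    ascending = sorted(notes)
    if pattern == "up":
        return ascending
    if pattern == "down":
        return ascending[::-1]
    if pattern == "updown":
        # Up, then back down without repeating the top or bottom note.
        return ascending + ascending[-2:0:-1]
    raise ValueError(f"unknown pattern: {pattern}")


if __name__ == "__main__":
    notes = chord_notes(9, "minor")    # object 9 is A, so A minor
    print(notes)                       # [69, 72, 76]
    print(arpeggio(notes, "updown"))   # [69, 72, 76, 72]
```

Keeping chord quality as a separate input means the right hand only has to choose a root, while a change of left-hand pose alters the harmony without moving the right hand.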

Sound was generated externally: control data left the headset as OSC messages, which were converted to MIDI to drive a software instrument in a DAW. The XR device itself handled interaction, visuals, and hand tracking.
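The OSC-to-MIDI path can be sketched with only the Python standard library. The encoder follows the OSC 1.0 message layout (NUL-padded address and type-tag strings, big-endian 32-bit arguments). The `/note` address, the pitch/velocity argument order, and the helper names are assumptions for illustration, not the prototype's actual message schema. The last function shows the raw MIDI note-on bytes a bridge would emit on receiving such a message.

```python
import struct


def osc_string(s: str) -> bytes:
    """Encode an OSC-string: ASCII bytes plus a NUL, padded to a multiple of 4."""
    data = s.encode("ascii") + b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def osc_message(address: str, *args) -> bytes:
    """Build an OSC 1.0 message with int32 ('i') and float32 ('f') arguments."""
    tags = ","
    payload = b""
    for arg in args:
        if isinstance(arg, bool):
            raise TypeError("bool arguments are not handled in this sketch")
        if isinstance(arg, int):
            tags += "i"
            payload += struct.pack(">i", arg)  # big-endian 32-bit integer
        elif isinstance(arg, float):
            tags += "f"
            payload += struct.pack(">f", arg)  # big-endian 32-bit float
        else:
            raise TypeError(f"unsupported OSC argument: {arg!r}")
    return osc_string(address) + osc_string(tags) + payload


def midi_note_on(note: int, velocity: int, channel: int = 0) -> bytes:
    """Raw MIDI 1.0 note-on: status byte 0x90 | channel, then note and velocity."""
    if not (0 <= note <= 127 and 0 <= velocity <= 127 and 0 <= channel <= 15):
        raise ValueError("note/velocity must be 0-127 and channel 0-15")
    return bytes([0x90 | channel, note, velocity])


if __name__ == "__main__":
    msg = osc_message("/note", 69, 100)  # hypothetical address: pitch, velocity
    print(len(msg))                      # 20
    print(midi_note_on(69, 100).hex())   # 904564
```

In practice the OSC message would be sent as a UDP datagram (for example with `socket.sendto`) to whichever OSC-to-MIDI bridge is listening. Keeping synthesis in the DAW leaves the headset's limited compute budget for tracking and rendering.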

Motivation

This was not meant to be a finished instrument. It was an exploratory prototype study designed to surface design questions for future XR musical instruments:

- What instrument sizes and spacings feel playable in mixed reality?

- Can hand poses become a meaningful control layer for harmony and expression?

- Is lightweight real-time pose recognition robust enough for performance?

- Can interactive machine learning help performers personalise the system to their own hands?

To explore that last point, I integrated an IML workflow that let performers record their own hand poses, train a classifier on-device, and map those poses to different chord types. That made the instrument adaptive rather than fixed: players could reshape the control vocabulary to match their own hands and mnemonic strategies.
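The record-train-map loop can be sketched as follows, assuming each hand pose arrives as a list of 3D joint positions with the wrist first. A k-nearest-neighbour classifier is used here because its "training" step is just storing the recorded examples, so a performer's new poses take effect immediately. The paper's actual classifier and features may differ, and `normalise`, `PoseClassifier`, and the chord-type labels are hypothetical.

```python
import math
from collections import Counter

Joint = tuple[float, float, float]


def normalise(joints: list[Joint]) -> list[float]:
    """Flatten a pose into a wrist-relative, size-normalised feature vector.

    Assumes joints[0] is the wrist. Rotation is NOT normalised in this sketch.
    """
    wx, wy, wz = joints[0]
    rel = [(x - wx, y - wy, z - wz) for x, y, z in joints]
    # Divide by the largest wrist-to-joint distance so hand size does not matter.
    scale = max(math.hypot(*j) for j in rel) or 1.0
    return [c / scale for j in rel for c in j]


class PoseClassifier:
    """k-nearest-neighbour classifier built from a performer's own examples."""

    def __init__(self, k: int = 3) -> None:
        self.k = k
        self.examples: list[tuple[list[float], str]] = []

    def record(self, joints: list[Joint], label: str) -> None:
        """Store one labelled example; for k-NN this is the whole training step."""
        self.examples.append((normalise(joints), label))

    def predict(self, joints: list[Joint]) -> str:
        """Return the majority label among the k closest recorded examples."""
        if not self.examples:
            raise RuntimeError("record at least one example before predicting")
        query = normalise(joints)
        nearest = sorted(self.examples, key=lambda ex: math.dist(query, ex[0]))[: self.k]
        return Counter(label for _, label in nearest).most_common(1)[0][0]


if __name__ == "__main__":
    clf = PoseClassifier(k=3)
    # Toy 3-joint "hands"; labels are the chord types the performer assigns.
    clf.record([(0, 0, 0), (1, 0, 0), (2, 0, 0)], "major")
    clf.record([(0, 0, 0), (1, 0.1, 0), (2, 0, 0)], "major")
    clf.record([(0, 0, 0), (0, 1, 0), (0, 2, 0)], "minor")
    clf.record([(0, 0, 0), (0.1, 1, 0), (0, 2, 0)], "minor")
    # Same shape as the first example, but moved and smaller.
    print(clf.predict([(5, 5, 5), (5.5, 5, 5), (6, 5, 5)]))  # major
```

Normalising to the wrist and to hand size lets the same pose match wherever the hand is held. Because rotation is left untouched here, a performance system would likely need more robust features.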

Study findings

I evaluated the prototype with 10 musically experienced participants. The study showed that the concept was promising, but also made several limitations very clear.

- Compactness matters: participants consistently wanted a smaller, more body-centred instrument.

- Familiar musical logic helps, but a piano-like layout in mid-air does not automatically feel like a piano to play.

- Pose-based chord control was compelling when performers could personalise it through IML.

- Tracking reliability, lack of haptics, and limited field of view made sustained performance harder.

- Several participants wanted the interface anchored to a physical surface for better timing, confidence, and reduced fatigue.

Those findings directly shaped the design direction of later projects, especially the move toward more compact, surface-anchored XR instruments and more robust sensing pipelines.

Legacy in the PhD

This project was the starting point for several later themes in my research:

- ergonomic design of XR musical instruments

- personalised hand-pose recognition

- mixed reality as more than a novelty interface

- the need for tactile or spatial anchoring in musical XR

- the gap between promising interaction ideas and reliable musical control

In that sense, this NIME paper is the point where the broader research trajectory really began.

Links

Paper • Code • IML demo video