Netz

Netz is an XR musical instrument that grew directly out of my PhD research in AI and Music, which focused on musical control, ergonomics, hand tracking, and expressive interaction in mixed reality. For more information, see the website at netzxr.com.

Netz was co-created through a longitudinal participatory design process with a professional keyboard player and music producer. Across ten design sessions, we developed an instrument that keeps the playing area compact, anchors interaction to a tabletop for better physical grounding, and uses hand- and finger-level gestures for nuanced musical control.

Research idea

The core design goal behind Netz was to move away from large, fatiguing mid-air XR interfaces and toward something that feels more playable, learnable, and expressive for musicians. The result is a compact mixed-reality instrument that sits on a physical surface while extending interaction into 3D space above it.

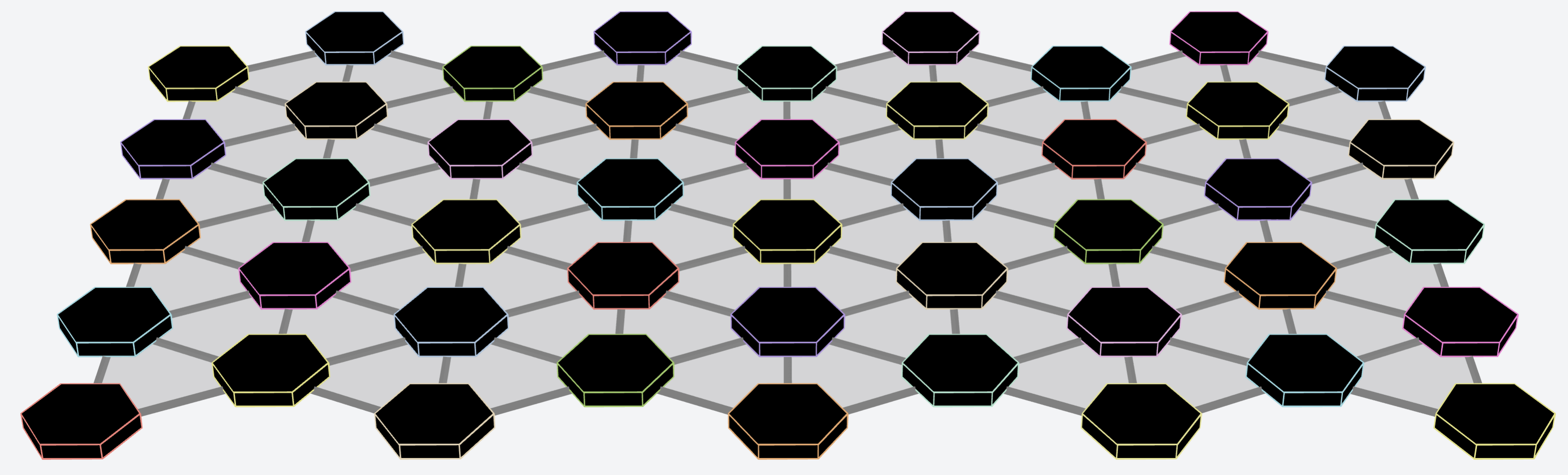

Its note layout is based on a custom Tonnetz-inspired structure designed for harmonic exploration. That makes it possible to play notes, scales, chords, and chord inversions through spatial patterns rather than a traditional piano keyboard layout. Additional “bridge” notes were introduced during the design process to make major and minor scale playing more practical with one hand.
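
To illustrate how chords become spatial patterns on a layout of this kind, here is a minimal Python sketch. It assumes a grid where one axis steps in perfect fifths (7 semitones) and the other in major thirds (4 semitones), so that the diagonal is a minor third (3 semitones). The axis assignment, the names `cell_to_midi`, `play_shape`, and `invert`, and the omission of the bridge notes are all assumptions made for illustration, not the layout Netz actually uses.

```python
from typing import List, Sequence, Tuple

Cell = Tuple[int, int]  # (x, y) position on the note grid

# Assumed axis intervals in semitones: +1 on x is a perfect fifth,
# +1 on y is a major third, so the diagonal (+1, -1) is a minor third.
FIFTH, MAJOR_THIRD = 7, 4

# Chord shapes as cell offsets from the root cell (hypothetical names).
MAJOR_TRIAD: List[Cell] = [(0, 0), (0, 1), (1, 0)]   # root, +4, +7
MINOR_TRIAD: List[Cell] = [(0, 0), (1, -1), (1, 0)]  # root, +3, +7


def cell_to_midi(cell: Cell, base_note: int = 60) -> int:
    """Map a grid cell to a MIDI note number; cell (0, 0) is base_note."""
    x, y = cell
    return base_note + FIFTH * x + MAJOR_THIRD * y


def play_shape(shape: Sequence[Cell], root: Cell = (0, 0), base_note: int = 60) -> List[int]:
    """Place a chord shape at a root cell and return its MIDI notes, low to high."""
    rx, ry = root
    return sorted(cell_to_midi((rx + dx, ry + dy), base_note) for dx, dy in shape)


def invert(notes: Sequence[int], times: int = 1) -> List[int]:
    """Return a chord inversion by moving the lowest note up an octave."""
    result = sorted(notes)
    for _ in range(times):
        result = result[1:] + [result[0] + 12]
    return result


print(play_shape(MAJOR_TRIAD))          # [60, 64, 67]  (C major)
print(play_shape(MINOR_TRIAD))          # [60, 63, 67]  (C minor)
print(invert(play_shape(MAJOR_TRIAD)))  # [64, 67, 72]  (first inversion)
```

Because such a layout is isomorphic, moving the root cell transposes a chord without changing its shape: `play_shape(MAJOR_TRIAD, root=(1, 0))` gives the same triad a fifth higher.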

How it works

- Surface-anchored mixed reality: the instrument is placed on a real table, giving the player a stable physical reference while still allowing 3D gestures above the surface.

- Expressive note layout: the interface uses an isomorphic harmonic layout tailored for learning chord shapes, scales, and transposition.

- Hand-pose control: different hand poses are used to distinguish between single notes, chords, and inversions.

- Continuous expression: wrist and finger movements can shape pitch, timbre, dynamics, and portamento-like transitions between notes.

- On-device sound engine: the prototype was built as a self-contained instrument with synthesis running directly on the XR headset.

- Interactive machine learning: a personalised hand-pose model was integrated to reduce sensing errors and improve musical control.
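
The idea of a personalised hand-pose model can be sketched as a small classifier trained on examples the player records themselves. The Python below is only an illustration under stated assumptions: it uses a k-nearest-neighbour vote, and the feature vector (five finger-curl values between 0 and 1), the class name `PoseClassifier`, and the labels are hypothetical. The model and features used in Netz are not described here.

```python
import math
from collections import Counter
from typing import List, Sequence, Tuple

Example = Tuple[Tuple[float, ...], str]  # (feature vector, pose label)


class PoseClassifier:
    """k-nearest-neighbour classifier over user-recorded pose examples."""

    def __init__(self, k: int = 3) -> None:
        self.k = k
        self.examples: List[Example] = []

    def add_example(self, features: Sequence[float], label: str) -> None:
        """Store one labelled example; 'training' is just remembering it."""
        self.examples.append((tuple(features), label))

    def predict(self, features: Sequence[float]) -> str:
        """Return the majority label among the k closest stored examples."""
        if not self.examples:
            raise ValueError("record at least one example first")
        query = tuple(features)
        nearest = sorted(self.examples, key=lambda ex: math.dist(ex[0], query))
        votes = Counter(label for _, label in nearest[: self.k])
        return votes.most_common(1)[0][0]


# Hypothetical features: curl of thumb, index, middle, ring, little finger
# (0 = fully extended, 1 = fully curled).
clf = PoseClassifier(k=1)
clf.add_example([0.9, 0.1, 0.9, 0.9, 0.9], "single_note")  # index finger pointing
clf.add_example([0.1, 0.1, 0.1, 0.1, 0.1], "chord")        # open hand
print(clf.predict([0.8, 0.2, 0.85, 0.9, 0.95]))
```

The appeal of this style of model for interactive machine learning is that adding an example is instant, so a player can correct a misclassified pose by recording it again and hear the effect immediately.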

On the product side, the current Netz website lists full XR integration, hand tracking and gesture recognition, DAW integration, customisable gestures, MPE-style note expression, and support for Meta Quest headsets, with Apple Vision Pro support planned.

Under the hood

The prototype described in my thesis was implemented in Unity for Meta Quest passthrough mixed reality. It combines:

- a custom tabletop XR interface

- collision-driven note interaction

- a 3,7,4-Tonnetz-inspired note structure

- hand-tracking-based gesture control

- an on-device wavetable synthesizer

- MIDI Polyphonic Expression (MPE) messaging for expressive per-note control
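
As a rough sketch of what per-note control means at the message level: MPE gives each sounding note its own MIDI channel, so pitch bend and timbre (CC 74) can be sent for one note without affecting the others. The Python below builds the raw MIDI bytes for a lower-zone MPE setup. The class name `MpeAllocator`, the simple first-free channel strategy, and the 48-semitone bend range (the MPE default) are assumptions for illustration and do not describe the messaging code in the Netz prototype.

```python
from typing import Dict, List

# Lower zone: channel 1 is the master channel, channels 2-16 carry notes.
# Channels are zero-indexed here, as they are in MIDI status bytes.
MEMBER_CHANNELS: List[int] = list(range(1, 16))
BEND_RANGE = 48.0  # semitones; assumed per-note pitch-bend range


def pitch_bend(channel: int, semitones: float, bend_range: float = BEND_RANGE) -> bytes:
    """Encode a bend in semitones as a 14-bit pitch-bend message (centre 8192)."""
    clamped = max(-bend_range, min(bend_range, semitones))
    value = int(round(8192 + clamped / bend_range * 8192))
    value = max(0, min(16383, value))
    return bytes([0xE0 | channel, value & 0x7F, value >> 7])  # LSB, then MSB


class MpeAllocator:
    """Assign each new note its own member channel and build its messages."""

    def __init__(self) -> None:
        self.free: List[int] = list(MEMBER_CHANNELS)
        self.active: Dict[int, int] = {}  # note number -> channel

    def note_on(self, note: int, velocity: int) -> bytes:
        if not self.free:
            raise RuntimeError("no free member channel")
        channel = self.free.pop(0)
        self.active[note] = channel
        return bytes([0x90 | channel, note, velocity])

    def note_off(self, note: int) -> bytes:
        channel = self.active.pop(note)
        self.free.append(channel)  # channel becomes available again
        return bytes([0x80 | channel, note, 0])

    def bend(self, note: int, semitones: float) -> bytes:
        """Per-note pitch bend, e.g. driven by a wrist or finger movement."""
        return pitch_bend(self.active[note], semitones)

    def timbre(self, note: int, amount: float) -> bytes:
        """Per-note timbre as CC 74; amount is clamped to the range 0..1."""
        value = int(round(max(0.0, min(1.0, amount)) * 127))
        return bytes([0xB0 | self.active[note], 74, value])


mpe = MpeAllocator()
print(mpe.note_on(60, 100).hex())  # 913c64
print(mpe.note_on(64, 100).hex())  # 924064
print(mpe.bend(64, 0.0).hex())     # e20040 (centred bend on the second note only)
```

In a setup like this, a continuous gesture value would be scaled to semitones or to a 0..1 amount and passed to `bend` or `timbre` on every tracking frame.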

One major theme of the project was dealing with system errors in XR hand tracking. Netz became the basis for my later work on using interactive machine learning and, beyond that, multimodal sensing to make XR musical instruments more reliable and expressive.

Outcomes

Netz has already led to both academic and commercial outcomes.

- It won the Software Prototypes and Non-Commercial Products category at the 2023 MIDI Innovation Awards.

- It was showcased at NAMM 2024 as part of the MIDI Innovation Awards winners.

- It formed the basis of multiple research publications on XR musical instruments, hand tracking, and interactive machine learning.

- It led to follow-on commercial exploration, backed by the Queen Mary Innovation Impact Fund and the Innovate UK ICURe Discover and ICURe Explore programmes.

The project was also featured in a Reuters article on AI and music.

Publications and links

Website • Demo video • When XR Meets AI (AES 2024) • Combining Vision and EMG-Based Hand Tracking (CMMR 2023) • Reducing Sensing Errors in a Mixed Reality Musical Instrument (VRST 2023)