Combining Vision and EMG-Based Hand Tracking for Extended Reality Musical Instruments

This paper grew out of a practical problem in my XR musical instrument research: camera-based hand tracking works well until the fingers become occluded, and that is often exactly when musical interaction needs the most precision. In this project, I explored a multimodal hand tracking pipeline that combines vision-based tracking from an XR headset with surface electromyography (sEMG) from a Myo armband.

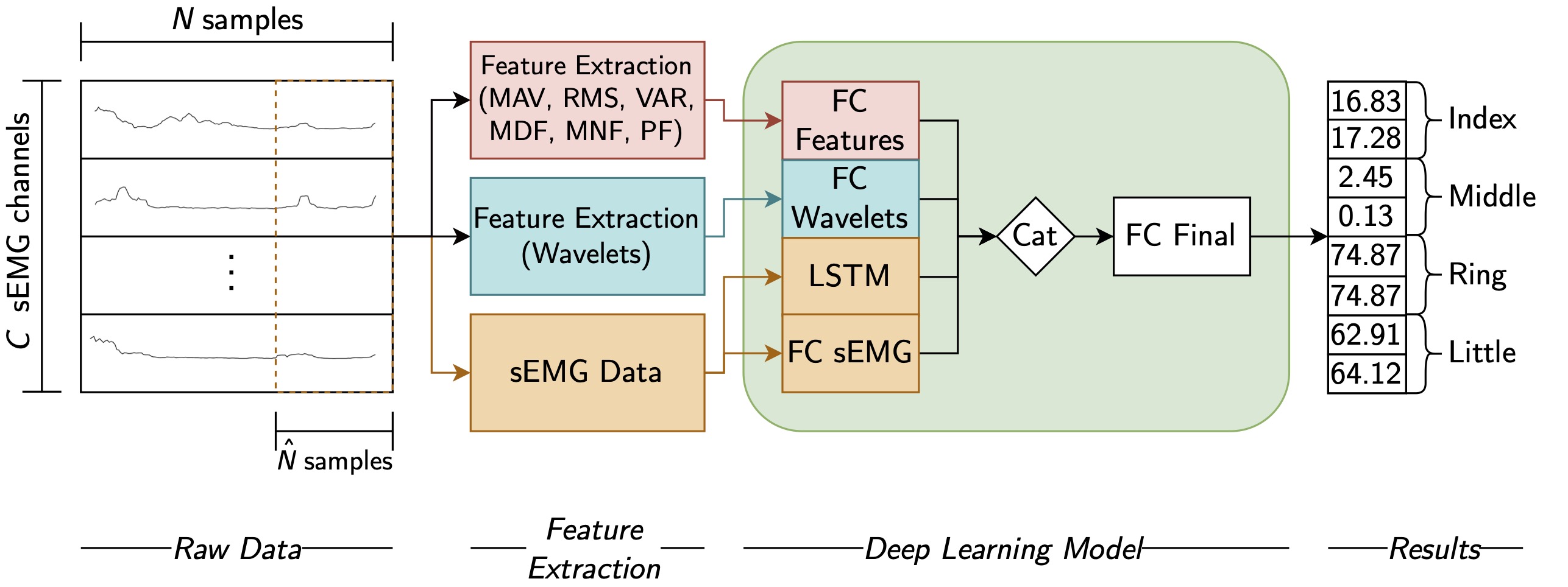

The key idea is simple: the headset provides the global hand position and orientation, while a deep learning model predicts finger joint rotations from forearm muscle activity. That means the system can keep estimating finger articulation even when the camera loses a clear view of the hand.
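As a rough illustration of that split of responsibilities, the sketch below merges a wrist pose from the headset with finger joint angles from an sEMG model into one hand pose. All names here (`WristPose`, `HandPose`, `fuse`, the `"index_mcp"`-style keys) are hypothetical, as are the units and quaternion ordering; this is not the data model used in the paper's code.

```python
from dataclasses import dataclass
from typing import Dict, Tuple

# Hypothetical names and units throughout; not the paper's actual structures.
FINGERS = ("index", "middle", "ring", "little")
JOINTS = ("mcp", "pip")


@dataclass(frozen=True)
class WristPose:
    """Global hand pose as reported by the headset's vision tracker."""
    position: Tuple[float, float, float]            # assumed: metres
    orientation: Tuple[float, float, float, float]  # assumed: quaternion (x, y, z, w)


@dataclass(frozen=True)
class HandPose:
    """Combined pose: wrist from vision, finger joints from sEMG."""
    wrist: WristPose
    finger_angles: Dict[str, float]  # e.g. "index_mcp" -> angle in degrees


def fuse(wrist: WristPose, semg_angles: Dict[str, float]) -> HandPose:
    """Combine the vision-tracked wrist pose with sEMG-predicted joint angles."""
    expected = {f"{finger}_{joint}" for finger in FINGERS for joint in JOINTS}
    missing = expected - set(semg_angles)
    if missing:
        raise ValueError(f"sEMG output is missing joints: {sorted(missing)}")
    # Keep only the eight joints this sketch knows about, in a stable order.
    angles = {key: semg_angles[key] for key in sorted(expected)}
    return HandPose(wrist=wrist, finger_angles=angles)
```

Because the finger angles never depend on the camera in this arrangement, the pose stays complete for as long as the headset can still report where the wrist is.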

Data preprocessing, feature extraction, and model pipeline

What the paper does

- combines Meta Quest 2 hand tracking with Myo armband sEMG sensing

- estimates the metacarpophalangeal (MCP) and proximal interphalangeal (PIP) joint rotations of the index, middle, ring, and little fingers

- uses a deep learning model to map windowed sEMG features to finger joint angles

- evaluates the multimodal system against the headset’s native vision tracking

- uses Leap Motion as a reference tracker for finger joint angle comparisons
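To make "windowed sEMG features" more concrete, here is a minimal sketch of sliding-window feature extraction. It rests on assumptions: the window length, hop size, and the choice of root mean square (RMS) and mean absolute value (MAV) are common sEMG conventions picked for illustration, not necessarily the features used in the paper, and the function names are hypothetical.

```python
import math
from typing import Iterator, List, Sequence

Sample = Sequence[float]  # one reading per sEMG channel at a single time step


def sliding_windows(samples: Sequence[Sample], window: int, hop: int) -> Iterator[Sequence[Sample]]:
    """Yield overlapping windows of `window` samples, advancing by `hop` samples."""
    for start in range(0, len(samples) - window + 1, hop):
        yield samples[start:start + window]


def window_features(win: Sequence[Sample]) -> List[float]:
    """Return [RMS per channel..., MAV per channel...] for one window."""
    n = len(win)
    n_channels = len(win[0])
    rms = [math.sqrt(sum(s[c] ** 2 for s in win) / n) for c in range(n_channels)]
    mav = [sum(abs(s[c]) for s in win) / n for c in range(n_channels)]
    return rms + mav


def extract_features(samples: Sequence[Sample], window: int = 40, hop: int = 10) -> List[List[float]]:
    """Turn a raw multi-channel recording into one feature vector per window.

    The defaults are assumptions: 40 samples is 200 ms at a 200 Hz sampling rate.
    """
    return [window_features(w) for w in sliding_windows(samples, window, hop)]
```

Each resulting feature vector would then be one input to the regression model, paired with the finger joint angles recorded at the same moment.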

This was a proof-of-concept study, but it established an important direction for the rest of my PhD: if vision-based XR tracking breaks under self-occlusion, adding a second sensing modality can make musical interaction more reliable.

Publication note

The original CMMR paper, linked below, is currently publicly available only as a preprint. After the conference, the organisers allowed authors to revise and extend their papers, and a longer version of this work was later published as a Springer LNCS book chapter:

Multimodal Hand Tracking for XR Musical Instruments Using Electromyography

Music and Sound Generation in the AI Era (LNCS 15236), first published online on 10 October 2025.

Main result

The multimodal approach often produced lower finger-tracking error under self-occlusion than vision-only tracking. In full-view conditions, vision tracking remained competitive or better in several tasks, which is expected. The value of the approach is therefore not that sEMG replaces vision, but that it complements it when camera tracking becomes unreliable.

That distinction is important: the overall tracking pipeline is multimodal, but the finger-pose model itself is unimodal and uses only sEMG-derived input features.
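The kind of comparison described above can be sketched as a per-joint error against a reference tracker. This is an assumption-laden illustration: mean absolute error in degrees is used here as a stand-in metric, and the function names and dictionary layout are hypothetical rather than taken from the paper's evaluation code.

```python
from typing import Dict, Sequence

JointSeries = Dict[str, Sequence[float]]  # joint name -> angle per frame (degrees)


def mean_absolute_error(predicted: Sequence[float], reference: Sequence[float]) -> float:
    """Average absolute difference between two equally long angle series."""
    if not predicted or len(predicted) != len(reference):
        raise ValueError("series must be non-empty and of equal length")
    return sum(abs(p - r) for p, r in zip(predicted, reference)) / len(predicted)


def compare_trackers(reference: JointSeries,
                     candidates: Dict[str, JointSeries]) -> Dict[str, Dict[str, float]]:
    """Per-tracker, per-joint error against the reference tracker.

    `reference` plays the role of the reference tracker's joint angles;
    `candidates` might hold e.g. "vision" and "multimodal" estimates.
    """
    return {
        name: {
            joint: mean_absolute_error(series[joint], reference[joint])
            for joint in reference
        }
        for name, series in candidates.items()
    }
```

Running this separately on full-view and self-occluded recordings would show where each tracker is stronger, which is the comparison the result above summarises.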

Relevance for musical XR

For XR musical instruments, small tracking failures quickly become musical failures: wrong notes, unstable control, broken gestures, and loss of performance flow. This paper was one of my first serious attempts to address that problem at the sensing level rather than only at the interaction-design level.

It also directly fed into later work on:

- more reliable control for Netz

- interactive machine learning for gesture interpretation

- larger-scale investigations into multimodal hand tracking for XR musical performance

Video

The video below shows a side-by-side comparison between the vision-only tracker and the multimodal system.

Recognition

The paper was published at CMMR 2023 and was nominated for the best paper award.

Links

CMMR paper (preprint) • Extended LNCS book chapter • Repository record • Video • sEMG Unity code • Python pipeline • Unity bridge